Background

Police Live Facial Recognition (LFR) operates as a biometric checkpoint. Vans equipped with facial recognition cameras scan the faces of anyone passing within roughly 50 metres, capturing biometric data without consent. In effect, people are treated as suspects and placed into a digital lineup against a secret police watchlist. If flagged, individuals are stopped and often detained, sometimes in handcuffs.

Although police signage claims there is “no legal requirement” to pass through LFR, practice suggests otherwise. Plainclothes officers have been stationed nearby to intercept those who avoid the cameras. People who cover their faces have been stopped and compelled to submit to scans, with at least one individual fined £90 for objecting (Romford, May 2019). Choosing not to be scanned can itself trigger suspicion.

Inaccuracy

Independent analysis of police data from 2016–2023 indicates that around 85% of the system’s alerts are wrong, misidentifying innocent people as suspects. Police claim a far lower false alert rate of 1 in 6,000, but this figure relies on a misleading method. Instead of measuring what share of stops are incorrect, they divide the number of incorrect stops by the total number of passers-by. For example, if 10 people are stopped and all 10 are innocent, the true error rate is 100%. Yet if 25,000 people walked past, the police would report it as 0.04% (10 ÷ 25,000), masking a failed deployment.

Despite this, inspectors leading LFR operations have described the system as “flawless.” In practice, those flagged must often prove their innocence, and the burden of proof against a supposedly “flawless” system can be extremely high. Even valid ID may not be accepted, with some individuals required to provide fingerprints or other biometric data (Oxford Circus, Jan 2022).

Recent cases highlight the risks:

- A Black man travelling from Croydon was detained for 30 minutes despite presenting multiple IDs; he is now pursuing legal action (Jan 2026).

- South Wales Police paid damages to a Black man wrongly arrested and held for 13 hours after being identified by facial recognition (Jan 2026).

- In Feb 2026, an Asian man was arrested for a burglary 100 miles away despite clear physical differences from the suspect. The CCTV image showed someone much younger, lighter-skinned, clean-shaven, with different facial features. Despite this, officers relied on the LFR match over their own judgement – raising serious questions about claims that the technology is meaningfully subject to human oversight.
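The gap between the two ways of counting errors described above can be checked with a few lines of arithmetic. The sketch below is illustrative only: it uses the hypothetical figures from the example (10 stops, all wrongful, 25,000 passers-by), and the function names are made up for this illustration rather than taken from any police or research methodology.

```python
def error_rate_per_stop(wrongful_stops: int, total_stops: int) -> float:
    """Share of stops that were wrong (the measure argued for in the text)."""
    if total_stops <= 0:
        raise ValueError("total_stops must be positive")
    return wrongful_stops / total_stops


def error_rate_per_passerby(wrongful_stops: int, passersby: int) -> float:
    """Wrongful stops divided by everyone who walked past the cameras."""
    if passersby <= 0:
        raise ValueError("passersby must be positive")
    return wrongful_stops / passersby


# Hypothetical deployment from the worked example, not real data.
total_stops = 10
wrongful_stops = 10
passersby = 25_000

per_stop = error_rate_per_stop(wrongful_stops, total_stops)
per_passerby = error_rate_per_passerby(wrongful_stops, passersby)

print(f"Error rate per stop:      {per_stop:.0%}")       # 100%
print(f"Error rate per passer-by: {per_passerby:.2%}")   # 0.04%
```

The same ten wrongful stops produce either figure depending only on the denominator, which is why the choice of denominator matters more than the headline number.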

Racial Bias

Freedom of Information data shows stark racial bias in the technology: in London in 2025, 80% of misidentifications involved Black individuals. Last month, Essex Police suspended its use of LFR after a study it had commissioned from the University of Cambridge found that the technology disproportionately targets Black people.

Ineffectiveness

Claims that LFR reduces crime are not supported by evidence. A University of Cambridge study (March 2026) found no statistically significant reduction in crime before, during, or after deployments.

Systemic Concerns

Opposition to LFR is not only about errors or bias, but about its role as a tool of control, one that disproportionately impacts marginalised communities. In the context of documented institutional racism in policing (Casey Review, 2023), such technologies will inevitably produce racist outcomes. Tasers, for example, work equally well on people of any race, yet they are used disproportionately on people of colour (8 times more often in 2019/20).

Monitoring by the Resistance Kitchen in Croydon (Sept 2024) found clear evidence of systemic racial disparities:

- 83% of stops were wrongful.

- 100% of Black individuals stopped were innocent, compared to 67% of white individuals.

- All Black individuals stopped by white officers were immediately handcuffed before any checks were carried out, despite full cooperation, while no white individuals were handcuffed prior to checks.

- Black individuals were also detained for significantly longer – over twice as long.

Expansion of Surveillance

Police databases used for facial recognition have expanded beyond the Police National Database (20 million images). FOI disclosures reveal access to passport and immigration databases (around 150 million images), without public or parliamentary approval. Usage of passport data increased more than 200-fold between 2020 and 2023. Under new legislation (Crime and Policing Bill), DVLA driving licence images, covering around 50 million people, may also be included.

Predictive Policing

LFR has been used at peaceful protests, with watchlists including individuals not suspected of crimes but considered undesirable attendees. In some cases, people are added based on predictions that they may commit offences in the future. Guidance from the College of Policing explicitly allows this. It states that watchlists can include individuals who police “suspect… are about to commit an offence,” as well as their “close associates,” even where those associates are not suspected of any wrongdoing in the past, the present or the future. This raises serious concerns about freedom of expression and assembly, and risks embedding historic biases into future policing.

Local Impact: Croydon

In 2025, police LFR scanned 8.6 million faces, with nearly half in London. Deployments are concentrated in areas with higher Black populations (London Assembly study, 2025). Croydon has the largest Black population in London, and within Croydon, Thornton Heath, where over 40% of residents are Black, has been heavily targeted. It is therefore no coincidence that Croydon town centre and Thornton Heath are among the most surveilled areas, while predominantly white areas such as South Croydon have seen no LFR deployment. With 15 permanent LFR cameras installed (Oct 2025), Croydon is now the most surveilled borough in the UK for facial recognition, exceeding the total number of deployments in Westminster and Newham combined, the next two highest boroughs.
Police claim they have community backing for LFR, but this support is largely manufactured: it relies on organisations funded by MOPAC that depend on Met approval to survive, effectively rubber-stamping endorsement. This is coupled with messaging that fuels fear about violent crime operating unchecked without LFR cameras. No community risk assessment was conducted prior to installing permanent LFR cameras in Croydon.

Call to Action

This level of surveillance is unacceptable. Over 20 community organisations in Croydon have called for an end to LFR and submitted evidence to the London Policing Ethics Panel as part of its review of the Metropolitan Police’s use of the technology, yet these concerns have been ignored. Other councils, including Islington, Newham, and Hackney, have passed motions opposing LFR, citing an independent legal review (Matrix Chambers, June 2022) which found the current legal framework “unfit for purpose.” Croydon must join them.

As Newham councillor Areeq Chowdhury warned: dystopian futures are not imposed overnight – they are built gradually, through small, seemingly minor changes, until fundamental rights we once took for granted become impossible to exercise.

More Information:

Croydon Unites For Palestine

Croydon Council Suppresses Public Scrutiny Of Investment in Elbit Systems

Croydon Demands Council Divest From Genocide

Croydon Divest – Protest Pension Committee Head

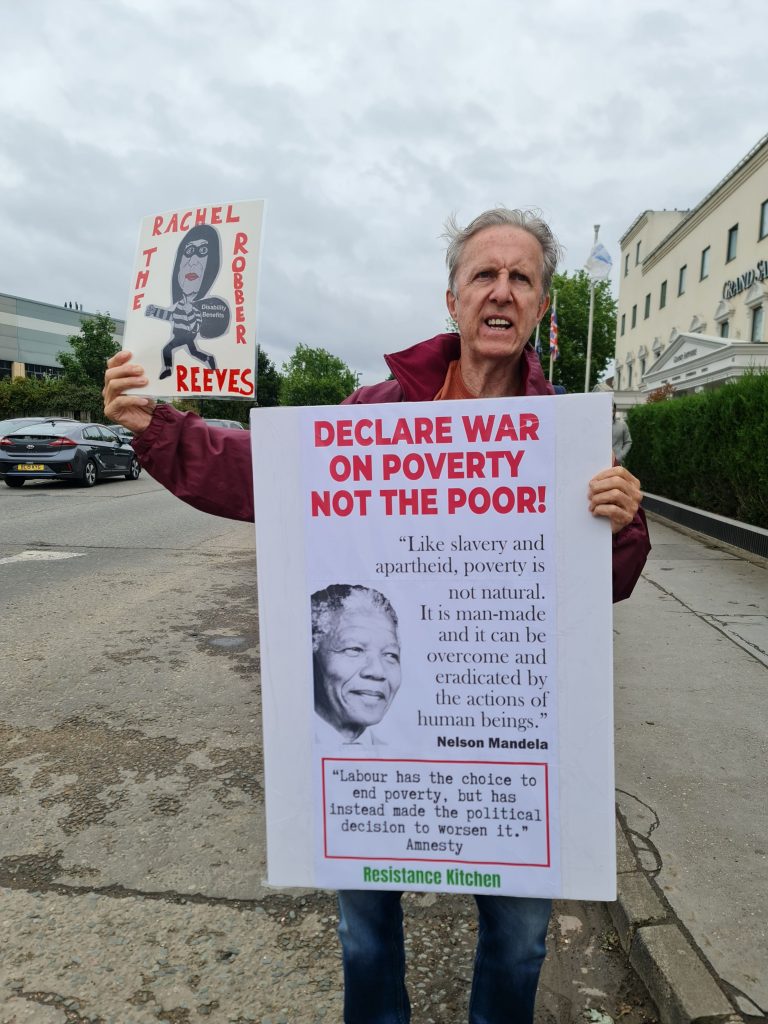

Protest Croydon Labour Party Fundraiser

Help Send 1000 Letters – Croydon Council Divest From Genocide

Croydon Divest Coalition Challenges Council Over Genocide Investment

Croydon Divest – Letter To Council

We Demand Croydon Council Divest From Genocide

Community Demands Police Stop Facial Recognition Surveillance

Met’s Lead On Facial Recognition – Insightful Exchange

Stop Police Facial Recognition Surveillance
